Diving into the ZDepths

What is a depth map?

According to Wikipedia, a depth map is an image or image channel that contains information relating to the distance of the surfaces of scene objects from a viewpoint. In other words, in a ZDepth map objects which are near the camera appear white, while objects which are far away appear darker. These values can also be inverted, so that far objects are white and near objects are black.
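To make the white-near / black-far convention concrete, here is a minimal Python sketch. It assumes the depth information arrives as a small grid of distances from the camera; the function name `depth_to_gray` and the `near_is_white` flag are made up for illustration and do not come from any compositing package.

```python
def depth_to_gray(distances, near_is_white=True):
    """Map camera distances to gray values between 0.0 (black) and 1.0 (white)."""
    flat = [d for row in distances for d in row]
    lo, hi = min(flat), max(flat)
    span = (hi - lo) or 1.0  # avoid dividing by zero when the scene is flat
    result = []
    for row in distances:
        new_row = []
        for d in row:
            t = (d - lo) / span  # 0.0 = nearest surface, 1.0 = farthest surface
            new_row.append(1.0 - t if near_is_white else t)
        result.append(new_row)
    return result


# Hypothetical 2x2 "image" of distances, in arbitrary units.
distances = [[1.0, 2.0], [3.0, 5.0]]
near_white = depth_to_gray(distances)                      # near objects are white
far_white = depth_to_gray(distances, near_is_white=False)  # inverted convention
```

Both results describe the same scene; which one you need depends on what the compositing tool expects.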

What can I do with it?

- DOF, objects which are far from the camera usually appear out of focus. With a depth map we can tell our compositing program which objects have to be blurred more.

- Fog and volumetric effects, imagine you want to make a post-apocalyptic street. Smoke and fog are everywhere. Farther down the street, though, more fog is needed, because the farther light travels, the more fog it encounters.

- Color Correction, objects which are really far away are tinted like the sky. The depth map can be used as a mask for color grading those objects.

- Scene Relighting, a sharp 3D geometry of the shot can be used for re-illuminating parts of the scene or the entire scene.

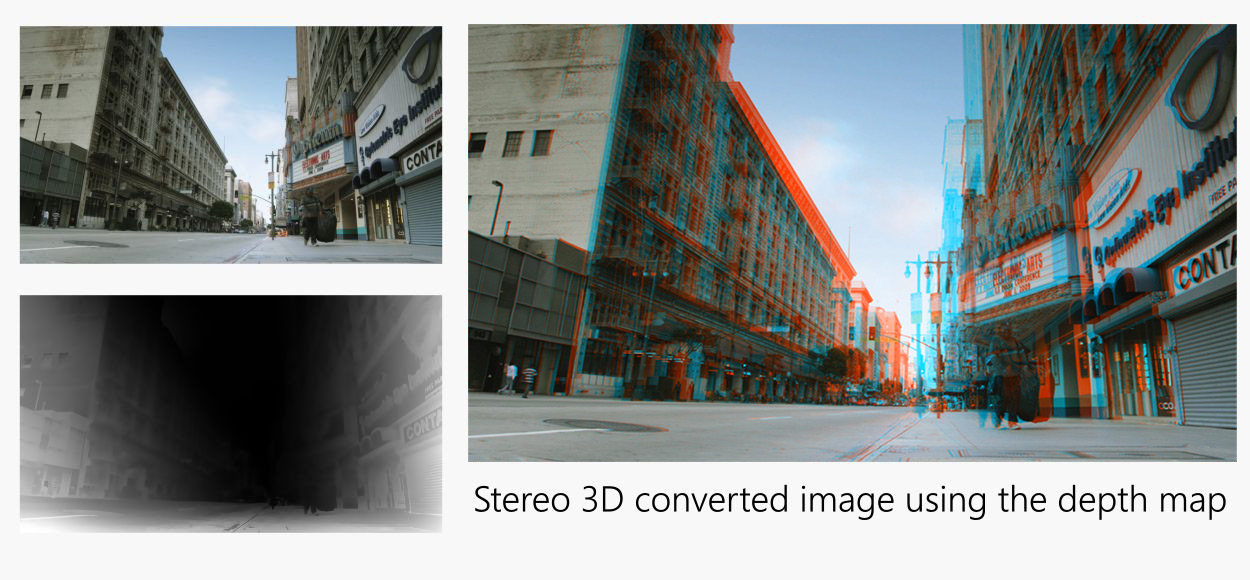

- 3D stereo conversion, because Z is equivalent to the third dimension, depth maps can be used to add parallax or a sense of depth to an image. So go and find some 3D glasses, you are going to need them!

- Depth mattes, do you need a specific effect to appear only within a certain range of distances? What other uses can you find for this?
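Two of the uses above, fog and depth mattes, can be sketched in a few lines of Python. This is only an illustration under stated assumptions: depth is a value between 0.0 (far, black) and 1.0 (near, white), colors are RGB tuples in the 0.0 to 1.0 range, and the names `add_fog` and `depth_matte` are invented for the example.

```python
def add_fog(pixel, depth, fog_color=(0.7, 0.7, 0.7), density=1.0):
    """Blend a pixel toward the fog color; darker (farther) depth means more fog."""
    amount = min(1.0, max(0.0, density * (1.0 - depth)))
    return tuple(c * (1.0 - amount) + f * amount
                 for c, f in zip(pixel, fog_color))


def depth_matte(depth, low, high):
    """Return 1.0 when the depth value falls inside [low, high], else 0.0."""
    return 1.0 if low <= depth <= high else 0.0


red = (1.0, 0.0, 0.0)
near_pixel = add_fog(red, depth=1.0)        # right next to the camera: no fog
far_pixel = add_fog(red, depth=0.0)         # far away: fully fogged
in_range = depth_matte(0.5, low=0.4, high=0.6)
out_of_range = depth_matte(0.9, low=0.4, high=0.6)
```

A real matte would usually have soft edges instead of a hard 0 or 1 cut, but the idea is the same: the depth value decides how much of the effect each pixel receives.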

Creating the illusion

Though some cameras are starting to offer the option of recording depth information, we are still far from having depth mattes as a standard part of the compositing workflow. That is no reason not to try to simulate that depth information, but you must know this is far from being a real solution to the problem. We are just emulating what we need, and it will not always work as expected.

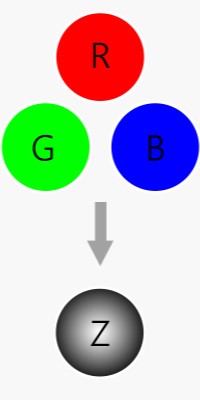

What I have been working on is what Charles Bordenave called a “pseudo-effect” for After Effects. This is more than just a regular preset, but not as independent from the program as a plugin would be. The idea is to process the RGB channels looking for contrast between different planes, and to give each plane a value in space. This process removes textures and specular highlights, leaving behind only the important information, which can then be applied as the user wishes.

The UI is quite simple: everything is organized in groups and all the controls are intuitive and self-explanatory. There are 3 different ramps which can be used to give the program a basic idea of how perspective works in the image, plus a circular ramp that can be used to set the vanishing point of the shot. The general controls let you tweak how the algorithm affects the images, especially for texture cleaning purposes. Finally, in the latest version I started to implement basic 3D stereo conversion features.
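To give an idea of what such ramps look like as data, here is a small Python sketch of a linear ramp and a circular ramp centered on a vanishing point. It is not the code of the pseudo-effect (which lives inside After Effects); the function names, the bottom-is-near assumption and the 0.0 = far / 1.0 = near convention are all choices made for this example.

```python
import math


def linear_ramp(width, height):
    """Vertical ramp: top row is far (0.0), bottom row is near (1.0)."""
    rows = []
    for y in range(height):
        value = y / (height - 1) if height > 1 else 1.0
        rows.append([value] * width)
    return rows


def circular_ramp(width, height, vanish_x, vanish_y):
    """Radial ramp: far (0.0) at the vanishing point, near (1.0) at the most distant corner."""
    corners = [(0, 0), (width - 1, 0), (0, height - 1), (width - 1, height - 1)]
    max_dist = max(math.hypot(cx - vanish_x, cy - vanish_y)
                   for cx, cy in corners) or 1.0
    return [[math.hypot(x - vanish_x, y - vanish_y) / max_dist
             for x in range(width)]
            for y in range(height)]


ground = linear_ramp(2, 3)
tunnel = circular_ramp(3, 3, vanish_x=1, vanish_y=1)
```

Ramps like these only describe the rough perspective of the shot; they would still need to be combined with the information extracted from the image itself.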

A video showing the development of the effect

2D to 3D conversion

The image above was converted to 3D stereo using my pseudo-effect. First I created the depth map as shown in the video, and then I activated the newest feature, which creates parallax and the 3D look. The effect does its job, but it can sometimes distort elements and sometimes it misreads the perspective of the image. I still have some ideas that may improve the quality of the effect, but I have yet to implement them.
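The basic idea of creating parallax from a depth map can be sketched in Python on a single row of pixels. This is a simplified illustration, not the pseudo-effect's actual implementation: it assumes depth values between 0.0 (far) and 1.0 (near), shifts near pixels further than far ones to build one eye's view, and fills the gaps by repeating the neighboring pixel. The name `shift_row` and the `max_shift` parameter are hypothetical.

```python
def shift_row(row, depth_row, max_shift):
    """Shift each pixel sideways in proportion to its depth (1.0 = near, 0.0 = far)."""
    width = len(row)
    out = [None] * width
    # Paint far pixels first so that nearer pixels overwrite them.
    order = sorted(range(width), key=lambda x: depth_row[x])
    for x in order:
        target = x + round(max_shift * depth_row[x])
        if 0 <= target < width:
            out[target] = row[x]
    # Fill the holes left behind by copying the pixel to the left.
    for x in range(width):
        if out[x] is None:
            out[x] = out[x - 1] if x > 0 else row[0]
    return out


row = ["a", "b", "c", "d", "e"]
depth = [0.0, 0.0, 1.0, 0.0, 0.0]   # only the middle pixel is near the camera
shifted = shift_row(row, depth, max_shift=1)
unchanged = shift_row(row, [0.0] * 5, max_shift=3)
```

In this toy row the near pixel `c` moves one step and covers `d`, and the hole it leaves is patched with a copy of `b`. That stretching is one way a simple approach like this can distort elements.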

This is not meant to be a 3D conversion tool (it’s too simple and many problems appear), but it can be useful for steady shots and as a way of intuitively checking whether the depth map works or not.

The future of 3D conversion

This feature of my pseudo-effect was inspired by the 3D conversion done in “Gravity”. That amazing work suggests that the future of 3D movies is heading towards shooting in 2D and converting to 3D in postproduction. Of course, the whole process wasn’t as procedural as my pseudo-effect. There was a lot of roto work to be done and great attention was paid to detail. Yet, you have more control than you would have with a 3D stereo camera rig on set, especially on a movie such as Gravity with long shots and complex camera movements. A 3D camera would have imposed many problems and limitations on set that directors surely want to avoid.

The future of my pseudo-effect

Honestly, I am not sure what I should do with this project right now. At the moment it is quite unstable and incompatible with some machines. I have mastered neither Java nor Python, and many parts of the code depend on other people’s work. This is not the kind of stuff you could sell right away. Perhaps when I am less busy I will try to contact someone who can help me make this effect compatible, universal and “slow machine friendly”. I will keep posting news about further development of this new tool. So,

to be continued…